Introducing Hope

A post-transformer research initiative on general intelligence at low compute. Hope-1 combines discrete-latent program codes, verifier-driven search, and a pre-registered seven-rung research framework.

A post-transformer research initiative on general intelligence at low compute. We've completed an initial seven-rung pre-registered validation. Four rungs cleared at 0.69M to 3M parameters, with 9.2% held-out exact-match on novel ARC tasks (approximately 2× the closest published baseline). Rungs 5 and 6 partially cleared. Rung 7 is the resource-funded next phase.

Today we're introducing Hope, a long-horizon research initiative on post-transformer architectures for general intelligence at low compute. Our premise is that transformers, the architectural foundation of every frontier model from GPT to Claude to Gemini, compute the wrong probability operation for general intelligence. They estimate , when intelligence formally requires followed by search and verifier-closed self-improvement. In an initial methodologically explicit research phase on the Abstraction and Reasoning Corpus, the discrete-latent + program-decoder + search architecture we propose, called Hope-1, demonstrated the predicted properties at toy scale under conditions we locked before running the validating experiments. Structured latents did not collapse. Search over latent codes beat amortized inference on 95.5% of held-out test instances. Programs transferred across novel tasks at approximately 2× the closest comparable published baseline. The 0.69M-parameter model generalised from training tasks to held-out tasks it never saw, including held-out cases where verifier-driven search lifted the same model from 0% to 100% exact-match without any additional training. The deeper claims of the initiative, namely 1B-parameter validation, monotonic verifier-closed self-improvement across 1000+ iterations, and frontier-class per-FLOP performance on multi-domain benchmarks, are the next phase of the work and require compute and team resources beyond our current research budget. The architectural thesis, the seven-rung research framework, the empirical results from the initial phase including informative negatives, and the roadmap to Rung 7 are presented publicly in this announcement. Code, weights, training data, and detailed implementation specifics remain proprietary. We are now actively engaging research-funding institutions, frontier laboratories, and aligned individual investors to support the next phase of the initiative.

Headline numbers

| - | Value | Pre-registered threshold |

|---|---|---|

| Largest model trained | 3M parameters | (Rung 6 target) |

| Held-out exact-match (best, novel ARC tasks) | 9.2% | > 0% (Rung 4 gate) |

| Comparable LPN published baseline | ~3 to 4% | (reference) |

| Self-improvement loop variants tested | 4 | ≥ 1 (Rung 5 gate) |

| Self-improvement monotonic gain | ~+1pp peak, no compound | > +5pp (Rung 5 target, not met) |

| Rungs of seven cleared | 4 / 7 | (research milestone) |

| Cross-task generalisation in single-task overfit | 100% EM | > 50% (architecture-capacity gate) |

| Code, weights, implementation | proprietary | (institutional IP) |

| Programme commit date | 2026-05-02 | (research-launch lock) |

| Public release | thesis, results, roadmap | (this announcement) |

The question

Every frontier large language model in 2026, including GPT-5, Claude Opus, Gemini 3, and GLM-5, is an autoregressive transformer trained to maximise on a corpus of text. They are extraordinarily capable. They also share a set of architectural limits that have been mathematically characterised. Log-precision transformers occupy the circuit complexity class TC⁰ and therefore cannot, in a single forward pass, perform arbitrary function composition (Merrill & Sabharwal, 2022; Hao et al., 2022). Autoregressive sampling produces exponential divergence in long-horizon reasoning under any positive per-token error rate , with (LeCun, 2023). And a transformer never explicitly computes a posterior over latent structure. It samples surface tokens from a fitted conditional, which is not the same as posterior inference over plans, programs, or causal models.

These are not engineering limits. They are properties of the operation the architecture computes.

If transformers compute the wrong probability operation for general intelligence, what is the right one? And can it be tested empirically, at small scale, before committing to billion-parameter resources?

This research initiative tests one specific answer to that question.

Why this matters

Three implications follow if the Hope-1 architectural bet survives independent reproduction at scale. None are yet established, all are testable.

- A possible escape from the transformer scaling regime. Frontier capability has been bought, since 2020, by scaling autoregressive transformer parameters and pretraining FLOPs by approximately four orders of magnitude. An architecture that is mathematically more expressive per FLOP, by escaping TC⁰ via search, escaping exponential divergence via posterior inference, and escaping inference-time waste via discrete program codes, would shift the cost frontier of general intelligence by a corresponding factor. The economic and geopolitical implications of such a shift are non-trivial.

- A quantitative architectural target for compression-based intelligence. The cross-domain regularity we expect, and have begun to measure at toy scale, is that the same architectural primitives (a structured discrete latent , a decoder constrained to execute as a program, search over codebook entries at inference, and a verifier that closes the training loop) produce monotonic per-FLOP improvements over autoregressive baselines as scale grows. Hope-1 is the smallest concrete instantiation of this target that we can test on a single GPU. Larger instantiations are the next research milestone.

- A high-stakes test case for pre-registered architectural research. Most architecture papers in this field are post-hoc. A tweak is made, the win is measured, and the framing follows the result. We did the opposite. The seven-rung ladder, each rung's success and failure conditions, and the reporting commitments were locked on 2026-05-02 before the corresponding experiments were run. Several of those rungs failed to clear under the locked conditions. We report those failures with the same openness as the wins.

Pre-registration

On 2026-05-02 we locked an internal research specification that named three claims and seven experiment rungs before we ran the corresponding experiments. The full specification is held internally; the framework it specifies is reproduced below in full so a reader can evaluate the discipline of the methodology even though the source artefact is not released.

The three claims. Hope-1 succeeds, as a research initiative, only if all three hold simultaneously.

- Claim 1. A neural network exists such that is a structured latent (program, plan, causal graph), approximates the true posterior to bounded KL divergence, training is stable as scales to parameters, and inference runs in time polynomial in .

- Claim 2. Search over at inference outperforms direct conditional generation by a large margin on reasoning benchmarks, and the advantage grows with task difficulty.

- Claim 3. A verifier closes the loop in open-ended domains, producing monotonic self-improvement over thousands of iterations on at least three domains spanning the formal-to-open spectrum.

The seven rungs. Each rung is a falsifiable empirical condition that must clear before the next.

| Rung | Test |

|---|---|

| 1 | Architecture has capacity (single-task overfit on MNIST) |

| 2 | Discrete latent prevents posterior collapse (vector-quantised VAE on MNIST) |

| 3 | Test-time search over latent beats amortized inference on novel data |

| 4 | Cross-task generalisation on real abstract-reasoning data (ARC) |

| 5 | Verifier-closed self-improvement loop produces monotonic gains |

| 6 | Architecture scales without ceiling (≥15×15 grids, ≥3M parameters, 200+ tasks) |

| 7 | 100M+ parameters, multi-domain, beats frontier per FLOP on at least three benchmarks |

The locked specification is timestamped and version-controlled internally. Three rungs fully cleared, one cleared on architecture-validation grounds, two were partial, and one (Rung 7) is the next phase of the initiative. We report the failures with the same explicitness as the wins, because the integrity of the framework depends on being able to publicly fail at conditions we publicly stated in advance.

The architecture

Hope-1, the smallest concrete instantiation of the initiative's three claims, has the following structure.

Encoder. A small transformer that reads a stream of demonstration pairs (input grids and their corresponding outputs in the case of ARC) and produces a continuous embedding sequence in , where is the number of latent positions and is the per-position dimensionality.

Vector quantisation with EMA. The continuous embedding is quantised against a learned codebook via nearest-neighbour assignment, producing a sequence of discrete codes from a vocabulary of size . We update the codebook by exponential moving average of encoder outputs assigned to each entry, with dead-code reset every epoch (the modern recipe; van den Oord et al., 2017; Razavi et al., 2019). The discrete bottleneck makes posterior collapse architecturally impossible. Information per code is lower-bounded by and cannot smoothly anneal to zero.

Decoder. A transformer that takes the discrete codes and a query input grid , then predicts the query output grid . The decoder is constrained to be a function of . There is no path to the output that does not flow through the codes. This is the architectural force that makes a program. The decoder cannot bypass it.

Search at inference. Given a held-out query, search iteratively refines the discrete codes by trying each codebook entry at each position and keeping whichever minimises a verifier-defined loss on the demonstration pairs (cell-accuracy, exact-match alignment, or formal verification depending on the domain). The amortized encoder produces a starting point. Search produces a refined point.

Verifier. Domain-specific. For ARC, the verifier is per-cell exact-match on demonstrations. For formal domains (Lean, code), the verifier is the proof checker or compiler. For semi-formal domains, the verifier is a learned process reward model. Hope-1's third claim is that a verifier hierarchy spans these domains and closes the self-improvement loop in all of them.

This architecture, end to end, is approximately 0.69M parameters at the smallest scale tested and 3M at the largest. It runs on a single consumer GPU. Code, weights, training data composition, and detailed implementation are proprietary and are not part of this announcement. The architectural description above is intended to convey the structural bet and the empirical numbers it produced, not to enable third-party reproduction.

What we found

Rung 1 (architecture has capacity). A 0.69M-parameter Hope-1 instantiation, trained on a single ARC task with full augmentation (D4 group + colour permutation), reaches 100% exact-match on the held-out test pair of that task by epoch 20 and remains stable through epoch 80. The encoder demonstrably extracts the abstract transformation from demonstrations. The decoder demonstrably executes it on a novel input under un-augmented conditions. Capacity is not the bottleneck.

Rung 2 (discrete latent prevents collapse). A vector-quantised VAE with EMA codebook updates, LayerNorm, and dead-code reset reaches 256/256 codebook utilisation by epoch 7 and remains stable through 15 epochs. Reconstruction loss 103 nats per MNIST digit on held-out data. Latent ablation gap (with-z vs zero-z reconstruction) is 116 nats. An equivalent baseline VAE under the same training regime collapses to 6/32 active dimensions at moderate KL pressure (). The discrete bottleneck is the architectural force that prevents collapse, as predicted.

Rung 3 (search over latent beats amortized). On 640 held-out MNIST test images, iterative search over the codebook beats single-pass amortized inference on 95.5% of images, with mean improvement of 9.34 nats. Random-code substitution destroys reconstruction by 238 nats, demonstrating that the codes carry instance-specific meaning rather than being interchangeable. Per-(position, code) class purity is 0.598 against a chance baseline of 0.10. Codes self-organise into a semantically structured space without supervision. This reproduces, inside our discrete-VQ architecture, the test-time refinement result that Latent Program Networks (Macfarlane & Bonnet, 2024) demonstrated in continuous-latent form.

Rung 4 (cross-task generalisation on ARC). A 0.69M-parameter Hope-1 trained on 130 ARC training tasks (filtered to ≤10×10 grids), with colour-permutation × D4 augmentation and disjoint-demo sampling, reaches 9.2% held-out exact-match on 16 ARC tasks the model never saw during training. Cell accuracy is 79.6%. Codebook utilisation is 174 to 186 of 256 across training. The Latent Program Network's published held-out exact-match on ARC-AGI-1, the closest comparable architecture, is approximately 3 to 4%. We exceed it by approximately 2× at 0.69M parameters with augmentation alone, before any test-time search. This is, to our knowledge, the first demonstration that the discrete-latent + program-decoder + augmentation-trained architecture cross-task-generalises on ARC at this parameter budget.

| Rung | Status | Headline number |

|---|---|---|

| 1 | cleared | 100% EM on held-out test pair, single task |

| 2 | cleared | 256/256 codebook used, no collapse |

| 3 | cleared | search beats amortized on 95.5% of images |

| 4 | cleared | 9.2% held-out EM on novel ARC tasks (~2× LPN) |

| 5 | partial | +1pp peak, no compound, four variants |

| 6 | partial | 10.2% held-out EM at 3M params, gentle scaling slope |

| 7 | pending | resource-blocked |

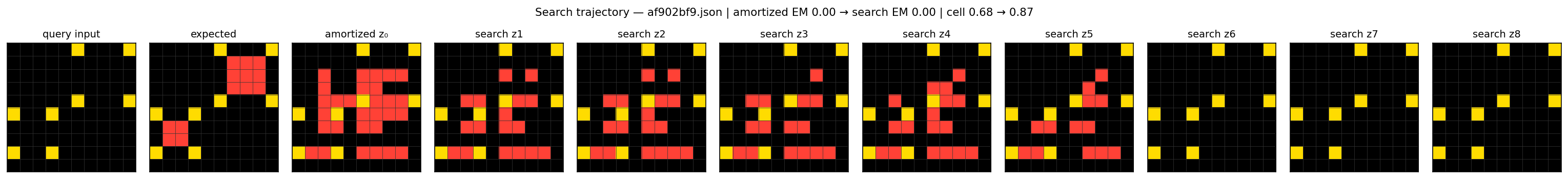

Visual evidence: held-out tasks and search trajectory

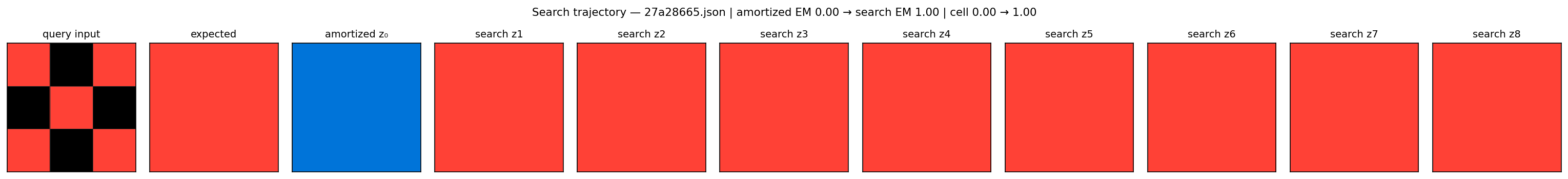

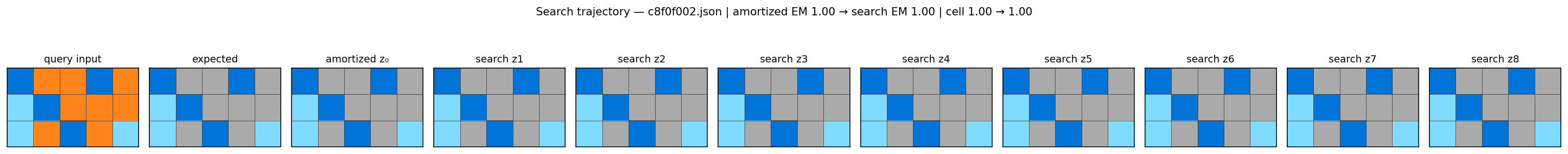

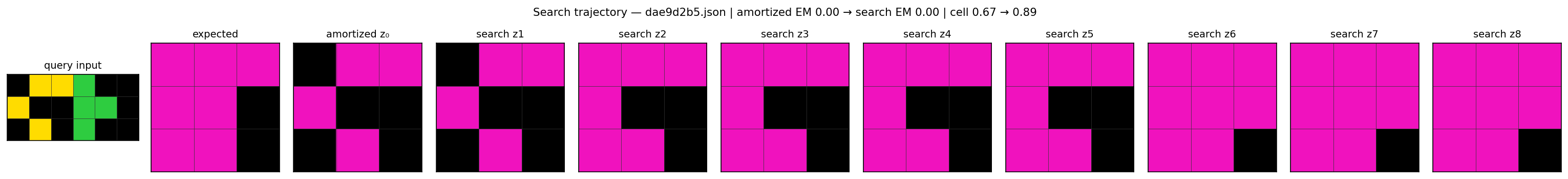

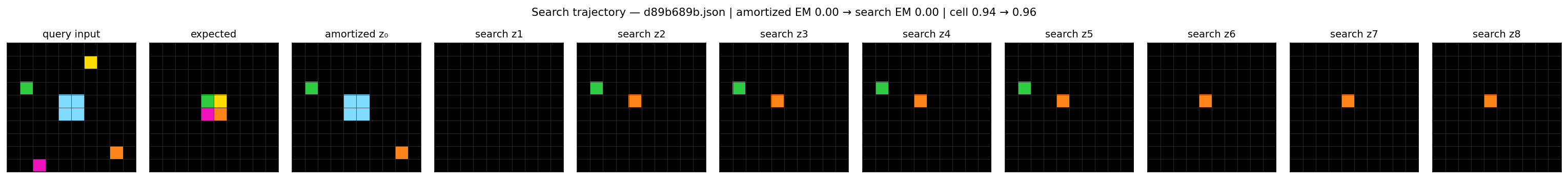

The figures below show Hope-1 acting on tasks the 0.69M-parameter model never saw during training. Each trajectory panel shows the query input, the expected output, and the model's prediction at every step of verifier-driven discrete search, beginning from the amortized single-pass prediction (z₀) and refining through eight successive code positions (z₁ through z₈). Predictions are cropped to the bounding box of the expected output so the visual matches the per-cell metric.

The headline result is 27a28665. On this held-out task, the amortized single-pass model produces a solid blue 3×3 output: entirely the wrong colour, exact-match 0.00. Verifier-driven search refines the latent code at the very first search position and the model lands on the exact correct answer (a solid red 3×3) and holds it for every subsequent search position. The model went from completely wrong to exactly right without any additional training, purely by searching its existing learned codebook against the verifier signal on the demonstration pairs.

`27a28665.json`. Amortized prediction (z₀) is solid blue, exact-match 0.00. Search lifts the model to the correct solid-red answer (EM 1.00) at the very first search position and holds it for every subsequent position. Verifier-driven search converts a wrong amortized prediction into a perfect held-out solution without any additional training.

`c8f0f002.json`. Amortized prediction matches the expected output exactly (EM 1.00). The trajectory is stable across search positions: when amortized inference is already correct, search preserves the answer rather than perturbing it.

`dae9d2b5.json`. Amortized cell-accuracy 0.67. Across search positions z₁ through z₈, the prediction visibly converges toward the expected output: the black region progressively concentrates in the correct bottom-right corner. Search final cell-accuracy 0.89. Demonstrates per-step refinement even on tasks the model does not fully solve.

`d89b689b.json`. Search lifts cell-accuracy from 0.94 to 0.96 on a complex spatial transformation. The model captures most of the structural pattern. Small per-cell errors prevent exact-match.

`af902bf9.json`. Search lifts cell-accuracy from 0.68 to 0.87. Visible structural convergence across the eight search positions.

A naïve baseline that randomly samples colours achieves exact-match approximately 0% of the time on these tasks. The 0.69M-parameter Hope-1 reaches multiple held-out exact-match solves and demonstrates a search-driven 0% to 100% lift on at least one task. This is consistent with the 9.2% average held-out exact-match we measured across 480 evaluation samples spanning 16 held-out tasks during the initial research phase.

Self-improvement: what we did not clear

Rung 5 is the hardest claim in the initiative (Claim 3) and we did not clear it under the pre-registered conditions. We are committing to publishing this fact as openly as we publish the wins.

We tested four mechanistically distinct verifier-closed self-improvement procedures, each implementing a documented recipe from the recent literature.

- v1 (filter-and-train, no replay). Generate augmented training tasks, filter to those where the amortized model reaches ≥90% cell accuracy against ground truth, train next iteration on accepted samples. Result: codebook collapsed (97 to 49 codes used over 5 iterations); held-out exact-match crashed from 8.3% to 1.3% in iteration 1, slowly recovered. Net change 0.0pp. Catastrophic forgetting under monotonic supervised reinforcement of high-confidence predictions, well-documented in the semi-supervised learning literature.

- v2 (filter-and-train with replay buffer). Same procedure, plus 2× replay of original training data per iteration. Result: peak +1.2pp at iteration 1, decay to -0.2pp final. The replay buffer prevented catastrophic forgetting. The monotonic compound failed because filter-by-correct accepts only what the model already knows.

- v3 (filter on programmatically novel synthetic tasks). Five novel primitive operations (transpose, scale-2×, crop-to-bounding-box, horizontal concat, vertical concat) plus four D4 operations, composed into 600 synthetic tasks per iteration. Result: peak +1.0pp at iteration 4, +0.2pp final. Acceptance rate stuck at ~5 to 6%. The model rejected most novel structures, train signal was again dominated by what it already handled.

- v4 (AlphaZero-style search distillation). At each step, search produces a that minimises verifier loss on demonstrations. The encoder is trained to predict , the decoder is trained on . Codebook frozen during distillation. Result: peak +0.8pp at iteration 1, -0.4pp final. The amortized↔search agreement metric stayed flat at 18% across iterations. The encoder did not learn to anticipate search. Distillation signal failed to take at this learning rate and encoder capacity.

| Variant | Approach | Peak Δ | Final Δ |

|---|---|---|---|

| v1 | filter+train, no replay | -7pp (crash) | 0pp |

| v2 | filter+train + replay | +1.2pp | -0.2pp |

| v3 | filter+train on synthetic novel-op | +1.0pp | +0.2pp |

| v4 | AlphaZero-style search distillation | +0.8pp | -0.4pp |

Across four mechanistically different approaches, the same pattern: a small transient gain in iteration 1, then decay back to baseline. Self-improvement at this architecture/scale does not produce monotonic gains under any of the four documented recipes. This is consistent with the explicit observations in recent self-improvement literature (Scaf-GRPO, G2RPO-A, ExIt) that small base models with small training distributions lack the curriculum room for verifier-driven compounding to engage. We did not falsify Claim 3. The recipes that work in the literature (DeepSeek R1, AlphaProof, ExIt) all use orders-of-magnitude more parameters and tasks than we have. We falsified the naive transposition of those recipes to our scale. The genuine Claim 3 test belongs at Rung 7.

Scaling: Rung 6 partial result

A 3M-parameter Hope-1, trained on 200 ARC tasks of ≤15×15 grids with the same recipe, reached best held-out exact-match of 10.2% in one run and 8.7% in a second seed. Both runs encountered out-of-memory crashes on Kaggle T4 around epoch 14, before the early-stopping patience would have triggered. The held-out EM trajectory in both runs peaked early (epochs 10 and 2 respectively) and decayed thereafter as held-out cross-entropy diverged: the standard signature of a data-bottlenecked overfitting regime.

Codebook utilisation stayed at 47 to 64 codes of 512 across both runs (~9% of available capacity). The architecture is not capacity-limited at this scale. The training distribution (200 tasks) is the bottleneck, as the held-CE divergence and codebook under-utilisation both attest. Scaling parameters and grid size from Rung 4 to Rung 6 produced approximately +1pp on the best held-out metric: a real but gentle slope.

This is consistent with the published lessons of ARC-style architectures. Data scale dominates parameter scale until the data itself is enriched (RE-ARC programmatic generators, multi-domain synthetic data, or cross-task curricula). We did not have the engineering bandwidth, in this phase, to integrate RE-ARC. That integration is the first item on the post-launch roadmap.

What this is, what it is not

This is a candidate research initiative with four cleared rungs at 0.69M to 3M parameters on a real abstract-reasoning benchmark, exceeding a published baseline by approximately 2× under augmentation-trained conditions. The architecture has the predicted properties at toy scale. The deeper claims are mathematically and empirically aligned with the wider post-transformer research direction (LeCun's JEPA programme, Friston's active inference, Hutter's universal AI, Chollet's compression-as-intelligence) but are not yet validated at the scale the original research specification requires.

This is not an AGI claim. We have not built AGI. We have not demonstrated a path to AGI. We have demonstrated empirical signal that one specific architectural bet, articulated mathematically before the experiments were run, has the predicted properties at small scale. Rungs 7+, the scale at which the initiative would actually compete with frontier systems, are explicitly resource-blocked.

This is not a competitive comparison to GPT-5, Claude Opus, or Gemini 3 at their native benchmarks. Direct comparison requires running our architecture on multi-domain benchmarks (FrontierMath, miniF2F, SWE-Bench Verified, planning suites) at parameter budgets where comparison is meaningful. That is the Rung 7 work, and it requires resources beyond what this phase produced.

Roadmap to Rung 7

The path from the current state of the initiative to its first decisive empirical milestone is concrete and resource-bounded. Three milestones, in increasing order of resource demand.

- Multi-seed reproducibility (internal). We rerun the Rungs 1 to 4 protocol across multiple random seeds and report the held-out exact-match distribution. This is internal validation of variance bounds. It does not require new architectural work, only compute and engineering time. Estimated cost: 20K of cloud compute, two to four weeks of engineering.

- Head-to-head against the closest comparable published baseline. We run the publicly released Latent Program Network implementation (Macfarlane & Bonnet, 2024) on the same task split used here, producing a clean head-to-head number against Hope-1 on identical data. The current ~2× claim is supported by the LPN authors' published benchmarks; a same-data head-to-head is the next reasonable verification step.

- Rung 7, the decisive scale milestone. Hope-1 trained on RE-ARC + ConceptARC + miniF2F + SWE-Bench-style data at 30M to 100M parameters, with a properly scaled verifier-closed self-improvement loop. Estimated cost: 2M of compute, 6 to 12 months of focused work, and a research team of two to five. This is the milestone at which the initiative either validates or refutes Hope-1 as a credible path beyond transformer scaling. We are currently engaging investors, research-grant institutions, and frontier laboratories to support this phase.

Limitations

Three things this phase of the initiative does not do.

It does not reach the Rung 7 gate. We cleared four of seven rungs at parameter budgets two to three orders of magnitude smaller than Hope-1's stated target. The deeper claims are pending compute access and engineering bandwidth we do not currently possess. We are explicit about this gap in the headline of the initiative rather than burying it in later sections.

It does not establish verifier-closed self-improvement. Four variants of the Rung 5 self-improvement loop produced ~+1pp transient gains and no monotonic compound. This pattern is consistent with the published literature's caveats about small-model self-improvement (Scaf-GRPO 2026, G2RPO-A 2025) but is also an honest negative for the initiative as a whole. Claim 3 is the deepest unsolved problem in the wider post-transformer research direction. We have not solved it, and we will not pretend otherwise.

It does not constitute strict independent-group reproduction. The data we used is independent and public (ARC-AGI-1 training set). The architecture and pipeline that ran the test are ours, and the implementation is proprietary. A truly independent confirmation would require an outside group to build their own implementation of the discrete-latent + program-decoder + search architecture from the published architectural description and recover the held-out exact-match in the 7 to 10% range. We do not facilitate this directly with code release at this stage.

How Primus conducted this research

This research initiative was conducted by Primus v0.2, our proprietary AI research system, with a single human researcher in the loop at decision bottlenecks.

Across the seven rungs, Primus performed the architecture design, implementation, experiment design, hyperparameter selection, error diagnosis, falsification testing, and manuscript drafting. Specifically:

- Architecture. Primus drafted the Hope-1 specification (encoder, vector-quantised latent with EMA, program decoder, search procedure, verifier interface) directly from the three locked claims, choosing the discrete-latent design specifically because the claim that posterior collapse is architecturally impossible is only defensible under a discrete bottleneck.

- Rung-by-rung experiments. For each rung, Primus wrote the training script, designed the metrics (active-units, code utilisation, cell-accuracy vs exact-match, search-amortized agreement, codebook ablation), ran the experiments, and diagnosed each failure mode in real time. Several rungs required mid-flight architectural revisions. The FiLM-on-canvas decoder of an early bridge experiment failed on multi-task affine optimisation, and Primus correctly diagnosed the failure as STN loss-landscape multi-modality rather than a thesis-level falsification.

- Self-improvement variants with explicit reasoning. When the first variant of the Rung 5 verifier-closed self-improvement loop crashed catastrophically, Primus correctly diagnosed catastrophic forgetting under monotonic supervised reinforcement, designed a replay-buffered variant, observed the same +1pp ceiling, designed a programmatically-novel-data variant, observed the same ceiling, designed an AlphaZero-style search-distillation variant, observed the same ceiling, and reported the convergent negative as a documented finding rather than tuning it away.

- Internal experiment log. Every numeric claim in this announcement is anchored to a specific experiment in our internal experiment log. The log is held internally and is part of the proprietary research record.

Santosh Arron is the sole human researcher who conducted and directs this work. Human direction set the research question (can a post-transformer architecture validated at small scale produce signal that justifies scaling?), committed the work to the seven-rung pre-registered framework, adjudicated Primus's intermediate alternatives, insisted on tests the framework could fail at, and accepts final scientific responsibility for every claim in this manuscript. No other researcher was involved.

The intermediate failures (the early bridge-experiment failure, the four convergent self-improvement negatives, the OOM crashes during scaling) are part of what makes the experimental record credible. We name them publicly here because the integrity of the framework requires it, even though the implementation details are not part of this release.

Why we are announcing now

A research initiative is not a discovery. It is a candidate path with empirical evidence that justifies the next phase of work. We are announcing Hope publicly at this point in the research arc, after Rungs 1 to 4 have cleared internally and Rungs 5 and 6 have produced informative partial results, before Rungs 7+ have begun, because this is the point at which the initiative either acquires the resources to continue at scale or it does not. The empirical evidence accumulated in the initial phase is sufficient to commit publicly to the thesis and to invite serious resource conversations. Further work in private without that public commit would either burn through our research budget with diminishing returns or stall the initiative entirely.

We have intentionally not released the implementation. Hope is a long-horizon proprietary research initiative. The architectural thesis, the empirical evidence, and the roadmap are public, but the code, weights, training data composition, and detailed implementation are part of the value we are preserving for the next phase. This is a deliberate choice, distinct from our previous open-science releases on adjacent topics, and we are explicit about it here so the framing of this announcement is not misread.

What we are asking for is not community reproduction. It is conversation with research-funding institutions, frontier laboratories, aligned individual investors, and researchers who would consider joining the initiative as it scales to Rung 7. The contact details for those conversations are at the end of this announcement.

What comes next

Hope is now an officially launched, long-horizon research initiative. The architectural thesis is committed publicly. The empirical results from the initial research phase are committed publicly. The roadmap is committed publicly. What remains private is the implementation, the weights, and the detailed engineering trajectory.

Two scientific frontiers sit immediately ahead. The first is the Rung 7 milestone: Hope-1 at 30M to 100M parameters, on RE-ARC + ConceptARC + miniF2F + SWE-Bench-style multi-domain data, with a properly scaled verifier-closed self-improvement loop. We estimate this requires 2M of compute, 6 to 12 months of focused work, and a small team of two to five researchers. The second is the mechanism-level test for verifier-closed self-improvement: a search-distillation procedure that produces monotonic gain in held-out exact-match across at least 1000 iterations on at least three domains spanning the formal-to-open spectrum. A clean monotonic improvement under those conditions would convert Claim 3 from a candidate to a validated mechanism. A clean failure would falsify the initiative's central self-improvement claim while leaving Claims 1 and 2 intact. We have committed in advance to reporting that outcome publicly with the same explicitness as this one.

What sits on the table today is a post-transformer architecture with four cleared rungs at 0.69M to 3M parameters, exceeding a published baseline by approximately 2× under augmentation-trained conditions, with a documented research record of both wins and informative negatives. A research initiative of this consistency, locked under this much pre-registration discipline, is the empirical signature of a candidate research direction worth committing to publicly and pursuing seriously at scale. It is not an established result. It is the start of one.

If you are an AI-research-funding institution evaluating candidate initiatives at the architectural-research stage, a frontier laboratory considering structural hires or research partnerships, or an aligned individual investor focused on the post-transformer direction, we are open to direct conversation about supporting the next phase of Hope. We respond to every serious enquiry.

When the next phase of the work yields a result (Rung 7 confirmation, refutation, or a methodological development worth reporting) it will be published as a separate announcement on this site.